AI-powered document analysis with complex layouts

Initial situation

The analysis of complex texts has made enormous progress in recent years with large language models. However, it is repeatedly shown that processing print documents with complex layouts (columns, tables, graphics, etc.) presents a challenge. Often, only the final documents are available in PDF format, making the extraction of document content a prerequisite for further use for other purposes.

Manual text extraction is associated with high effort on a case-by-case basis and does not allow for reacting to market developments with sufficient speed. Therefore, automatic processing of documents is required, which makes the content (especially the text) from the documents available quickly and with high quality.

Solution idea

Previous approaches to document analysis require several steps, including layout analysis, which distinguishes document regions for text, images, and other content, followed by the actual extraction of text from the regions. Current tools and frameworks combine these steps and deliver the desired results in a single pass. Vision Language Models (VLMs) are used for this purpose, among others.

The project utilized the Docling, Qwen, and YOLO tools to extract content from documents with complex layouts. In addition to output quality, resource requirements were also considered, which can vary greatly. The number of GPUs and VRAM size are particularly crucial here. Processing times vary significantly depending on the tool. With Docling, processing times of between 1 and 2 seconds per double-page spread could be achieved on a high-performance server with 4 Nvidia DGX A100 GPUs.

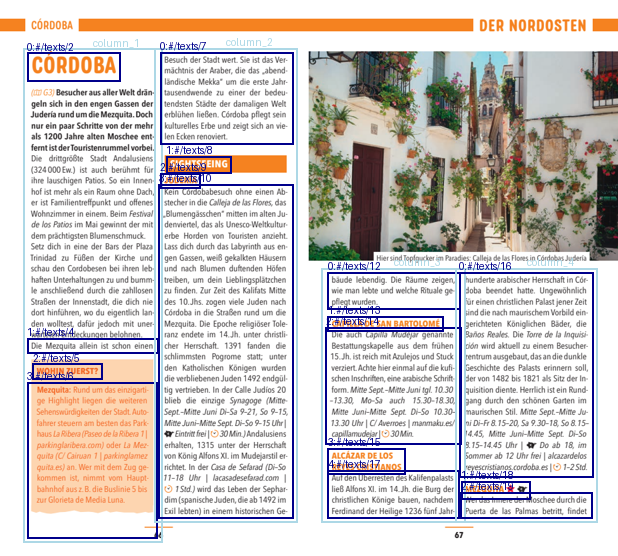

As a test set, 8 documents with a total of 30 double-page spreads were compiled from several travel guides. The pages have different layouts and structures, covering many typical document and layout structures. The ground truth was created manually from the pure flowing text of the documents, which could then be used to evaluate the quality of the results.

Benefit

In addition to the desired text content (headings and body text), the documents contain many other structures such as headers/footers, text boxes, images, special characters, explanations, and legends, which must be considered during analysis and distinguished from the actual text.

Normal text and image regions are recognized very reliably. Post-processing is required for certain structures such as text artifacts (special characters), headers/footers, and text blocks in image regions. Special attention should be paid to the reading order of the text regions, which is only partially captured correctly and requires its own post-processing logic.

The results of the analysis and automatic post-processing were evaluated using ground truth texts. Similarity measures based on Levenshtein Matching Blocks were used. The matches range from 82 to 98 percent, depending on the test document. For the documents with the lowest matches, it is easy to understand which document structures are problematic for extraction. Specifically, these are, for example, elaborately designed timelines and large images in multi-column layouts. These structures can be taken into account even better in both visual analysis and post-processing. However, insights into which document structures lead to problems are also important, which can be considered in the future when creating the documents.