Explainable AI: Evaluation of explainability methods in application

Initial situation

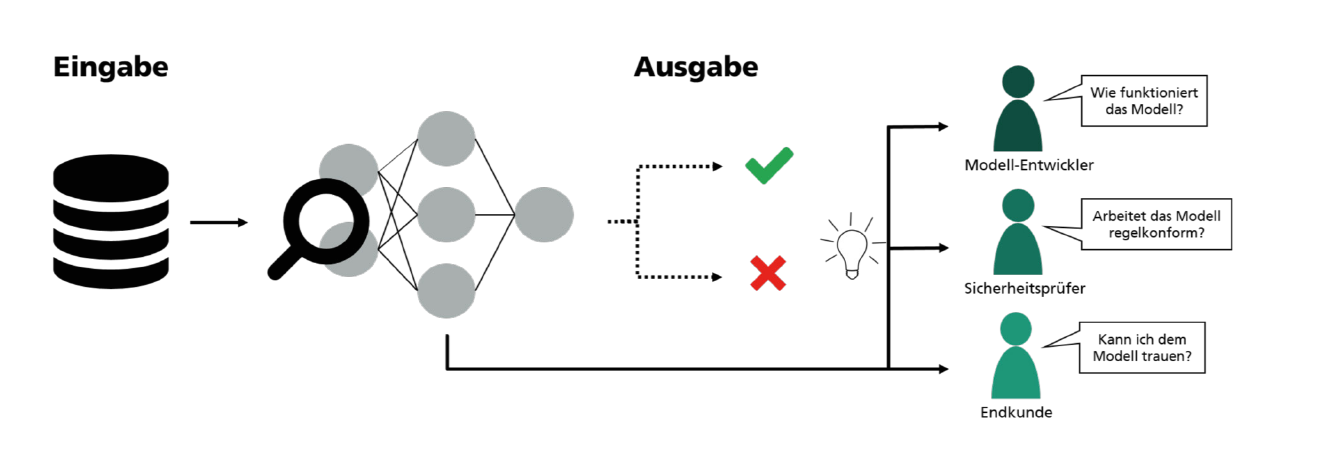

In many applications, AI methods offer enormous potential. However, high predictive accuracy alone is often not sufficient, for example when AI is used in highly regulated sectors such as the pharmaceutical industry. Here, the AI models used should ideally be comprehensible. Explainable AI (XAI) methods, which can be used to interpret AI models and their decisions, offer one solution.

So far, however, these approaches remain largely confined to research: XAI techniques are often tested only on publicly available benchmark data sets. In addition, few techniques have been developed to date for evaluating the XAI methods themselves and the explanations they generate. Overall, there is a lack of practical examples and approaches for assessing the suitability of XAI methods in real use cases.

Explainability methods in application

This is precisely where the project comes in. It focuses on a methodological concept for using XAI methods in real-world applications. On the one hand, the explanation of AI models and their predictions will be considered. On the other hand, attention will be paid to evaluating the safety of model decisions.

The following questions are addressed in the project:

- What are the current limitations to the use of AI in highly regulated industries?

- What are the requirements for the traceability of AI models?

- Which XAI methods are suitable for which application scenarios?

- How can the use of XAI methods be supported and evaluated in real use cases?

The joint work will result in best-practice approaches in the form of a process model for using explainability methods in real use cases. The process model will also be tested as a prototype as part of the project.

Target group

The project "Evaluation of explainability methods in application" is open to all business partners from Baden-Württemberg who are interested in the comprehensibility of AI models and would like to actively build a competitive advantage. Ideally, your company already has use cases in which AI is used in production. As a partner, you benefit from participating in the project in several ways:

Knowledge advantage

- through exclusive research results

- by building a network of experts and users

- through best-practice approaches

- through early strategic alignment

Communication

- Strong public visibility

- Innovation leadership

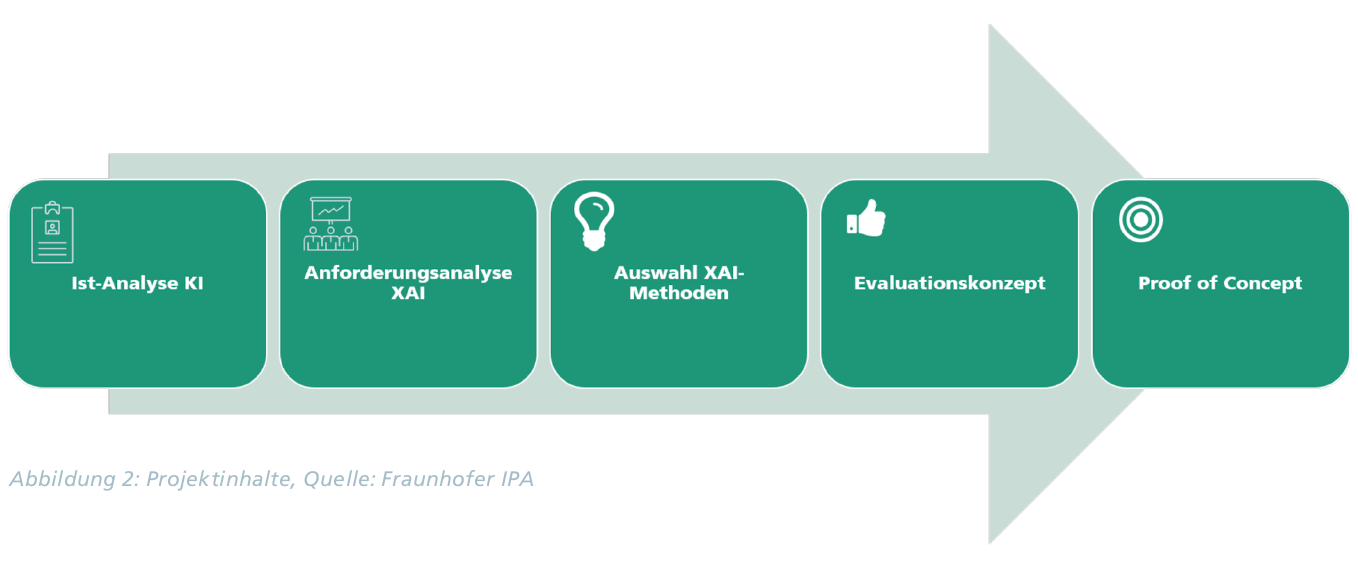

Project process

As shown in Figure 2, the first step is a workshop to determine whether AI applications are already in use in your company but cannot be used to their full extent due to regulatory limitations. If AI is not yet used in production in your company, AI use cases that suit the project format are developed instead. In the next step, the requirements for the XAI methods and the explanations they generate are derived from the selected use case. The selected methods for extracting explanations from the AI model are then adapted to the use case and evaluated against the previously defined requirements. The project concludes with a proof of concept that generates meaningful and comprehensible explanations for the selected use case and thus makes the AI application more transparent and trustworthy.
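To make the evaluation step more concrete, the following is a minimal sketch of how one requirement on explanations might be checked. It is not the project's method. It assumes a simple deletion test: if an explanation is faithful, resetting the features it ranks highest should change the model output more than resetting the features it ranks lowest. The toy model, its weights, the occlusion-based attribution, and all function names are hypothetical and chosen only for illustration.

```python
from typing import Callable, List, Sequence

Model = Callable[[Sequence[float]], float]


def linear_model(x: Sequence[float]) -> float:
    """Toy stand-in for a production model (weights are made up)."""
    weights = [3.0, 2.0, 0.5, 0.0]
    return sum(w * v for w, v in zip(weights, x))


def occlusion_attributions(
    model: Model, x: Sequence[float], baseline: Sequence[float]
) -> List[float]:
    """Score feature i by the output change when only feature i is reset to its baseline."""
    full = model(x)
    scores = []
    for i in range(len(x)):
        occluded = list(x)
        occluded[i] = baseline[i]
        scores.append(full - model(occluded))
    return scores


def deletion_drop(
    model: Model, x: Sequence[float], baseline: Sequence[float], indices: Sequence[int]
) -> float:
    """Absolute output change after resetting the given features to their baseline."""
    occluded = list(x)
    for i in indices:
        occluded[i] = baseline[i]
    return abs(model(x) - model(occluded))


def faithfulness_gap(
    model: Model,
    x: Sequence[float],
    baseline: Sequence[float],
    scores: Sequence[float],
    k: int,
) -> float:
    """Drop from deleting the k top-ranked features minus drop from the k bottom-ranked."""
    ranked = sorted(range(len(x)), key=lambda i: abs(scores[i]), reverse=True)
    top_drop = deletion_drop(model, x, baseline, ranked[:k])
    bottom_drop = deletion_drop(model, x, baseline, ranked[-k:])
    return top_drop - bottom_drop


if __name__ == "__main__":
    x = [1.0, 1.0, 1.0, 1.0]          # hypothetical input sample
    baseline = [0.0, 0.0, 0.0, 0.0]   # hypothetical "feature absent" reference
    scores = occlusion_attributions(linear_model, x, baseline)
    gap = faithfulness_gap(linear_model, x, baseline, scores, k=2)
    min_gap = 1.0                     # hypothetical requirement from the analysis step
    print(f"attributions={scores}, gap={gap}, requirement met={gap >= min_gap}")
```

In a real use case, the toy model would be replaced by the deployed model, the attribution function by the XAI method under evaluation, and the threshold by a value agreed on during the requirements analysis. A single deletion test covers only one aspect of explanation quality; requirements such as stability or comprehensibility for domain experts would need separate checks.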